As artificial intelligence becomes more integrated into everyday work and applications, the need for strong safety measures grows just as fast. At its first-ever LlamaCon, held in 2025, Meta unveiled a set of tools designed to protect applications built on its open-source Llama models from misuse. This marks a serious move toward responsible AI deployment, especially as the company expands access to its latest Llama models.

The centerpiece of the announcement was a new security-focused framework called LlamaFirewall. Designed for developers using generative AI in real-world applications, this toolkit works in real time to detect and block threats like prompt injection attacks or attempts to jailbreak the model. It also includes an alignment checker to make sure AI agents don’t drift from their intended roles, and a feature called CodeShield, which analyzes generated code before it is returned or executed to catch insecure or exploitable scripts.
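To make the idea of layered, real-time checks concrete, here is a minimal sketch in Python. It is not LlamaFirewall's API: every name in it (`scan_prompt`, `scan_generated_code`, `guarded_call`, and the pattern lists) is hypothetical, and simple regular expressions stand in for the trained classifiers and static analysis a real guardrail would use.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical patterns, for illustration only. A production scanner
# would rely on trained classifiers rather than a short regex list.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]

# Hypothetical insecure-code patterns, standing in for static analysis.
INSECURE_CODE_PATTERNS = [
    re.compile(r"\beval\s*\("),
    re.compile(r"\bos\.system\s*\("),
    re.compile(r"verify\s*=\s*False"),
]


@dataclass
class ScanResult:
    allowed: bool
    reason: str


def scan_prompt(text: str) -> ScanResult:
    """Block input that matches a known prompt-injection phrase."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(text):
            return ScanResult(False, f"possible prompt injection: {pattern.pattern}")
    return ScanResult(True, "ok")


def scan_generated_code(code: str) -> ScanResult:
    """Block generated code that matches a known insecure pattern."""
    for pattern in INSECURE_CODE_PATTERNS:
        if pattern.search(code):
            return ScanResult(False, f"insecure pattern: {pattern.pattern}")
    return ScanResult(True, "ok")


def guarded_call(
    prompt: str, generate: Callable[[str], str]
) -> Tuple[ScanResult, Optional[str]]:
    """Scan the prompt, call the model, then scan its output.

    Returns the verdict and the output; the output is None when blocked.
    """
    verdict = scan_prompt(prompt)
    if not verdict.allowed:
        return verdict, None
    output = generate(prompt)
    verdict = scan_generated_code(output)
    return verdict, (output if verdict.allowed else None)
```

In this sketch, `generate` is any function that takes a prompt and returns text, so a real model call can be swapped in. The point is the shape of the pipeline: one check before the model sees the input and another before its output reaches the user.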

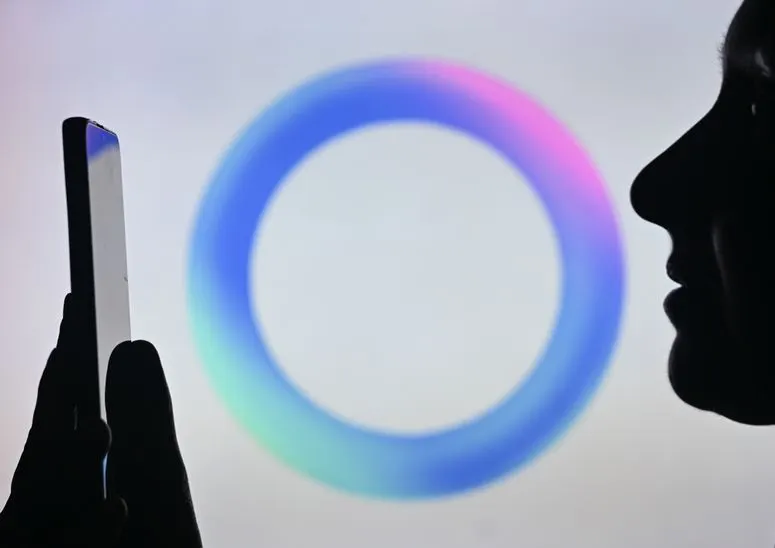

Meta also upgraded its content moderation tool, Llama Guard, which now enters its fourth version. What makes Llama Guard 4 notable is its ability to moderate not just text but also image-based content, offering a multimodal layer of safety. This addition shows Meta’s growing focus on handling more complex forms of content across platforms.

Alongside these tools, Meta introduced CyberSecEval 4, a benchmark suite that tests AI models under real-world cybersecurity scenarios. One of its highlights is AutoPatchBench, which measures how well a model can identify and fix security vulnerabilities in code. These benchmarks are useful not just for Meta but for the broader research community, offering transparent ways to measure how safe an AI model really is.

In a more privacy-focused move, Meta is also testing Private Processing within WhatsApp. Meta describes this feature as handling AI requests inside a secure, confidential computing environment, keeping message data private and inaccessible to Meta itself. While still being tested, it suggests a future where powerful AI features don’t come at the cost of user trust.

All of these initiatives respond to a growing reality: as AI becomes more capable, its potential risks expand. Without the right safeguards, tools meant to help can quickly become attack surfaces—whether through unsafe code generation, manipulated outputs, or privacy breaches. Meta’s open-source approach, sharing its tools and benchmarks freely via GitHub, underscores a larger push for transparency and collaboration in the AI community.

This shift toward combining AI power with operational responsibility is becoming the new standard. In the past, success was defined by how smart or fast a model was. Now, it’s about how responsibly it can be used in the real world—how well it protects users, respects privacy, and aligns with ethical goals.

Want to Learn How to Use AI Responsibly in Your Work?

If you’re exploring how to use AI tools like Llama, ChatGPT, or Claude in your own projects, especially in content creation, consider taking the course AI-Powered Content Creation for Brands and Products by PromptHero.

This course is built for marketers, creators, and entrepreneurs who want to make AI a real part of their workflow. Turn ordinary product photos into content that drives sales. Master AI tools to create weeks of content in minutes. Learn to leverage cutting-edge AI features to transform your content creation process – from static photos to dynamic videos, perfect segmentation, and professional editing that drives engagement.
